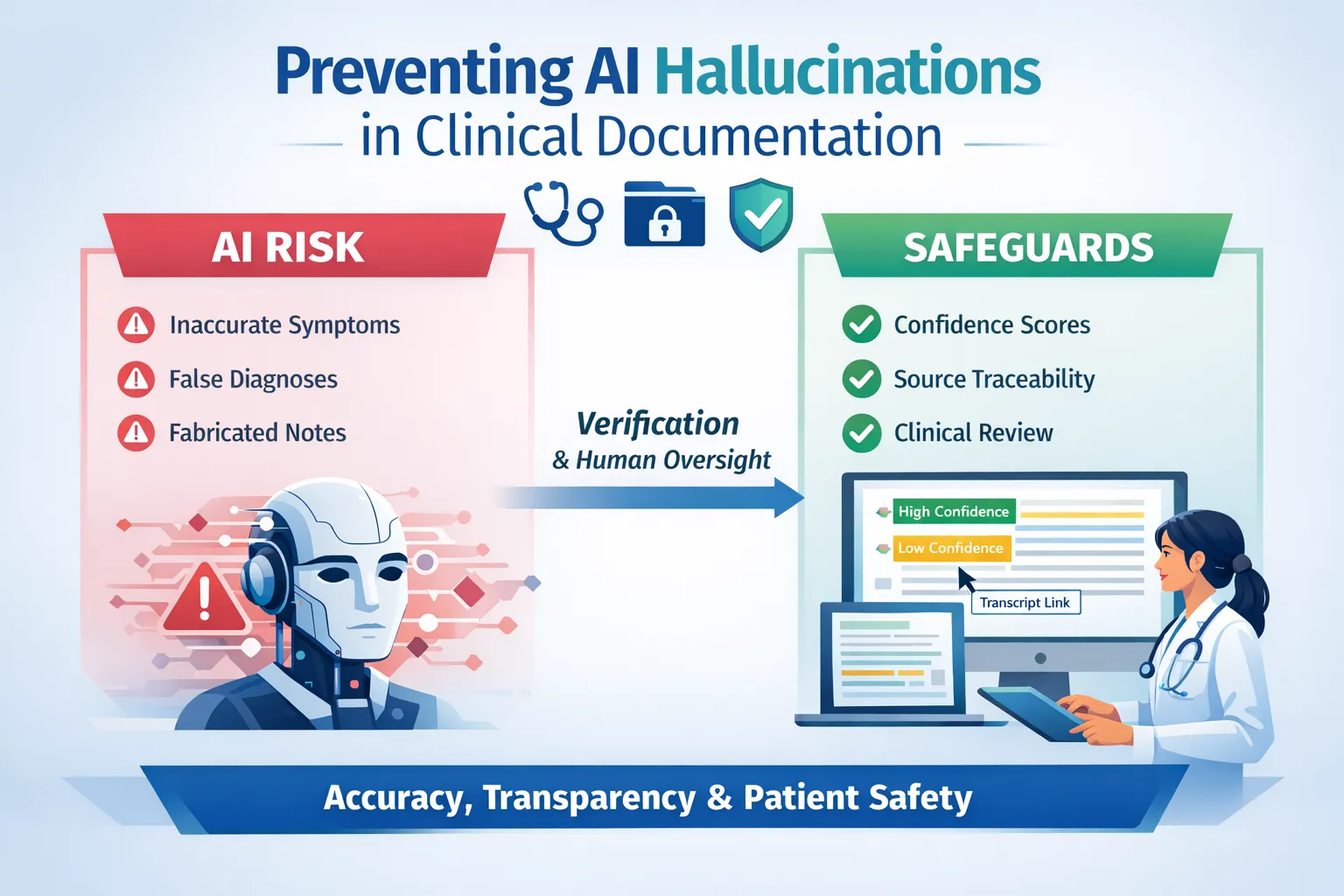

AI-generated documentation is increasingly used to summarize clinical encounters and patient records, which makes accuracy a central concern. There is a constant risk that AI-generated notes include details that were never stated or documented. Researchers call this an AI hallucination, and in healthcare settings these fabrications pose serious risks to patient safety.

Clinicians are no strangers to such risks. Every chart, referral, and patient handout must be accurate, defensible, and grounded in what was actually said or documented. As AI documentation tools enter clinical workflows, one concern consistently rises to the top: hallucinations in AI.

Understanding where hallucinations come from and how confidence scoring reduces the risk is essential for responsible AI adoption.

What Are AI Hallucinations?

At a technical level, AI hallucinations occur when language models generate information that is statistically likely but not factually grounded in the input. In clinical documentation, this can show up as:

- A symptom implied but never stated

- A diagnosis framed as more definitive than discussed

- A medication reference inferred from context

- A summary statement not traceable to source material

This is why unmanaged hallucinations in AI represent a real clinical risk, not because AI is “making decisions,” but because unverified text can quietly enter the record.
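To make the last bullet concrete, here is a deliberately simple sketch of how a tool might flag note sentences that cannot be traced to the source transcript. It assumes the note is already split into sentences and uses word overlap as a stand-in for grounding. The function names, the stopword list, and the 0.6 threshold are illustrative assumptions, not how any particular scribe product works. Word overlap also misses subtler hallucinations, such as a diagnosis framed more definitively than discussed, because those reuse the source's own words.

```python
import re

# Illustrative stopword list (hypothetical); words like "patient" and
# "reports" are filler in notes and would otherwise count as ungrounded.
STOPWORDS = {
    "a", "an", "the", "and", "or", "of", "to", "in", "on", "for", "with",
    "is", "was", "are", "has", "have", "had", "patient", "reports", "states",
}


def content_tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens with stopwords removed."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def flag_ungrounded(note_sentences: list[str], transcript: str,
                    min_overlap: float = 0.6) -> list[tuple[str, float]]:
    """Return (sentence, overlap) for note sentences poorly supported by the transcript.

    overlap = share of a sentence's content tokens that also occur in the
    transcript. This is a crude lexical proxy, not a clinical validator.
    """
    source = content_tokens(transcript)
    flagged = []
    for sentence in note_sentences:
        tokens = content_tokens(sentence)
        if not tokens:
            continue  # nothing checkable in this sentence
        overlap = len(tokens & source) / len(tokens)
        if overlap < min_overlap:
            flagged.append((sentence, round(overlap, 2)))
    return flagged


transcript = "I've had a headache for three days. No fever."
note = [
    "Headache for three days.",
    "No fever.",
    "Patient reports nausea and photophobia.",  # never mentioned in the transcript
]
print(flag_ungrounded(note, transcript))
# → [('Patient reports nausea and photophobia.', 0.0)]
```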

The Industry-Wide Concern

The concern about AI-generated misinformation isn’t limited to healthcare. Surveys indicate that about 77% of businesses are worried about AI producing hallucinated outputs, which shows the phenomenon is widely recognized across industries rather than being a purely academic issue.

For clinical practices, this translates to a simple reality: AI tools require verification systems, not blind trust.

The Scale of the Problem

Research published by Cem Dilmegani at AIMultiple shows that hallucination rates in popular large language models range from about 15% to over 52%, depending on task and model architecture.

This wide variation suggests that different AI medical scribes can carry very different hallucination risks. AI confidence scoring helps clinicians navigate this complexity by flagging uncertain outputs for review.
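One way to picture "flagging uncertain outputs for review" is a cutoff that routes low-confidence items to a clinician's attention. The sketch below assumes each generated item already carries a confidence value between 0 and 1; the `NoteItem` type, the `triage` function, and the 0.85 cutoff are hypothetical choices for illustration only.

```python
from dataclasses import dataclass


@dataclass
class NoteItem:
    text: str
    confidence: float  # assumed to lie in [0.0, 1.0]; higher means more certain


def triage(items: list[NoteItem], review_below: float = 0.85):
    """Split items into (routine, flagged) using a confidence cutoff.

    Nothing is discarded: flagged items are surfaced for closer clinician
    review, and routine items still go through normal sign-off.
    """
    routine = [item for item in items if item.confidence >= review_below]
    flagged = [item for item in items if item.confidence < review_below]
    return routine, flagged


items = [
    NoteItem("Headache for three days", 0.97),
    NoteItem("History of migraine", 0.62),
    NoteItem("Takes ibuprofen as needed", 0.91),
]
routine, flagged = triage(items)
print([item.text for item in flagged])  # → ['History of migraine']
```

Raising the cutoff flags more items (more review work, fewer missed errors); lowering it does the opposite, so choosing it is a clinical policy decision as much as a technical one.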

How Confidence Scores Function as a Safety Net

AI confidence scoring provides a measurable way to validate accuracy by assigning a numerical value that reflects how certain the AI system is about the content it extracts and generates.
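How such a numerical value is computed varies by system. One common heuristic, sketched below under the assumption that the model exposes a natural-log probability for each generated token, is the geometric mean of token probabilities, exp(mean log-probability), paired with the probability of the single least certain token. This is not necessarily what any documentation platform uses, and a model can be confidently wrong, so a score like this supports clinician review rather than replacing it.

```python
import math


def sequence_confidence(token_logprobs: list[float]) -> float:
    """Geometric-mean token probability, exp(mean log-probability), in (0, 1].

    Assumes one natural-log probability per generated token.
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(sum(token_logprobs) / len(token_logprobs))


def weakest_token(token_logprobs: list[float]) -> float:
    """Probability of the least certain token; one doubtful word (a dose, a drug name) can matter."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(min(token_logprobs))


# Three tokens generated with probabilities 0.9, 0.8 and 0.5.
lps = [math.log(p) for p in (0.9, 0.8, 0.5)]
print(round(sequence_confidence(lps), 3))  # → 0.711
print(round(weakest_token(lps), 3))        # → 0.5
```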

In documentation-first AI platforms, confidence scoring works alongside human verification to create multiple layers of protection. It is applied primarily to suggested ICD-10 codes: clinicians can trace each code back to the source sentence it was derived from, and a visual indicator (such as "green") shows the AI's confidence based on that sentence. For tasks like summarizing medical records uploaded as PDFs, the confidence score instead reflects how well the PDF was converted to text, flagging potential issues caused by illegible or unclear text.
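A rough sketch of the two uses described above: a suggested ICD-10 code kept together with the sentence it came from, with its score mapped to a color band, and a page-level check on PDF-to-text conversion. The data layout, the green/yellow/red cutoffs, and the assumption that the OCR engine reports a per-word confidence between 0 and 1 are all hypothetical; real products may score and display these differently.

```python
from dataclasses import dataclass


@dataclass
class CodeSuggestion:
    icd10: str            # suggested code, e.g. "R51.9" (headache, unspecified)
    source_sentence: str  # the transcript sentence the code was derived from
    confidence: float     # assumed to lie in [0.0, 1.0]


def indicator(confidence: float) -> str:
    """Map a score to a traffic-light label; the cutoffs are hypothetical."""
    if confidence >= 0.85:
        return "green"
    if confidence >= 0.60:
        return "yellow"
    return "red"


def ocr_page_check(word_confidences: list[float], min_word: float = 0.6,
                   max_low_share: float = 0.1) -> tuple[float, bool]:
    """Return (mean word confidence, needs_review) for one PDF page converted to text.

    The page is flagged when more than max_low_share of its words fall below
    min_word, a typical symptom of illegible or unclear source text.
    """
    if not word_confidences:
        return 0.0, True  # no text recognized: treat the page as unreadable
    mean = sum(word_confidences) / len(word_confidences)
    low = sum(1 for c in word_confidences if c < min_word)
    return mean, low / len(word_confidences) > max_low_share


s = CodeSuggestion("R51.9", "I've had a headache for three days.", 0.93)
print(s.icd10, indicator(s.confidence), "<-", s.source_sentence)
mean, needs_review = ocr_page_check([0.98, 0.95, 0.40, 0.30, 0.97])
print(round(mean, 2), needs_review)  # → 0.72 True
```

Keeping the source sentence on the suggestion itself is what makes the score checkable: the clinician sees not just "green" but the words that justified it.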

This allows practices to benefit from AI efficiency while maintaining the verification-first approach essential for patient safety.

How Confidence Scoring Improves Document Verification

Building Trust Through Transparency and Compliance

Documentation-first AI platforms designed for clinical risk management pair confidence scoring with additional safeguards. Source traceability, the basis of traceable AI clinical notes, lets clinicians click any section of a generated note and see the exact part of the audio transcript it came from, offering verification beyond numerical scores alone. This glass-box AI approach addresses concerns about AI systems that generate plausible-sounding but potentially misleading content.
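To illustrate the idea, here is a minimal sketch of the data a traceable note might keep: each section stores links to the timestamped transcript segments it was built from, so a click can display them and any section without a link can be flagged as unverified. The `Segment` and `NoteSection` types, the index-based links, and the function names are hypothetical; how a given platform actually stores these links is not described here.

```python
from dataclasses import dataclass, field


@dataclass
class Segment:
    start_s: float  # offset into the encounter audio, in seconds
    end_s: float
    text: str


@dataclass
class NoteSection:
    heading: str
    text: str
    source_ids: list[int] = field(default_factory=list)  # indexes into the transcript


def trace(section: NoteSection, transcript: list[Segment]) -> list[Segment]:
    """Return the transcript segments a note section was generated from."""
    return [transcript[i] for i in section.source_ids if 0 <= i < len(transcript)]


def untraceable(sections: list[NoteSection], transcript: list[Segment]) -> list[str]:
    """Headings of sections with no valid link back to the transcript."""
    return [s.heading for s in sections if not trace(s, transcript)]


transcript = [
    Segment(0.0, 4.2, "I've had a headache for three days."),
    Segment(4.2, 6.0, "No fever."),
]
hpi = NoteSection("HPI", "Headache for 3 days, afebrile.", [0, 1])
plan = NoteSection("Plan", "Start sumatriptan.", [])  # no source link recorded

for seg in trace(hpi, transcript):  # what a "click" on the HPI section would show
    print(f"[{seg.start_s:.1f}-{seg.end_s:.1f}s] {seg.text}")
print(untraceable([hpi, plan], transcript))  # → ['Plan']
```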

Systems that handle protected health information must also follow HIPAA requirements, with safeguards such as encryption, access controls, and audit logs.

Responsible AI Adoption for Healthcare Practices

Hallucinations in AI are an inherent limitation of current machine learning technologies, which is why human verification remains essential. Prioritizing diverse training data, maintaining human oversight, monitoring continuously, and working within regulatory frameworks are crucial for building trust in AI technologies in healthcare.

With proper safeguards like confidence scoring, source traceability, and clinician verification workflows, practices can gain AI’s efficiency while reducing the risk that hallucinated content reaches the patient record.

The future of clinical documentation is not about eliminating human judgment; it is about giving clinicians better tools to focus that judgment where it’s needed most.